To give AI-focused women academics and others their well-deserved (and overdue) time in the spotlight, TechCrunch has been publishing a series of interviews focused on remarkable women who’ve contributed to the AI revolution. We’re publishing these pieces throughout the year as the AI boom continues, highlighting key work that often goes unrecognized. Read more profiles here.

In the spotlight today: Allison Cohen, the senior applied AI projects manager at Mila, a Quebec-based community of more than 1,200 researchers specializing in AI and machine learning. She works with researchers, social scientists and external partners to deploy socially beneficial AI projects. Cohen’s portfolio of work includes a tool that detects misogyny, an app to identify online activity from suspected human trafficking victims, and an agricultural app to recommend sustainable farming practices in Rwanda.

Previously, Cohen was a co-lead on AI drug discovery at the Global Partnership on Artificial Intelligence, an organization that guides the responsible development and use of AI. She’s also served as an AI strategy consultant at Deloitte and a project consultant at the Center for International Digital Policy, an independent Canadian think tank.

Q&A

Briefly, how did you get your start in AI? What attracted you to the field?

The realization that we could mathematically model everything from recognizing faces to negotiating trade deals changed the way I saw the world, which is what made AI so compelling to me. Ironically, now that I work in AI, I see that we can’t (and in many cases shouldn’t) be capturing these kinds of phenomena with algorithms.

I was exposed to the field while I was completing a master’s in global affairs at the University of Toronto. The program was designed to teach students to navigate the systems affecting the world order, from macroeconomics to international law to human psychology. As I learned more about AI, though, I recognized how vital it would become to world politics, and how important it was to educate myself on the topic.

What allowed me to break into the field was an essay-writing competition. For the competition, I wrote a paper describing how psychedelic drugs would help humans stay competitive in a labor market riddled with AI, which qualified me to attend the St. Gallen Symposium in 2018 (it was a creative writing piece). My invitation to, and subsequent participation in, that event gave me the confidence to continue pursuing my interest in the field.

What work are you most proud of in the AI field?

One of the projects I managed involved building a dataset containing instances of subtle and overt expressions of bias against women.

For this project, staffing and managing a multidisciplinary team of natural language processing experts, linguists and gender studies specialists throughout the entire project life cycle was crucial. It’s something that I’m quite proud of. I learned firsthand why this process is fundamental to building responsible applications, and also why it’s not done enough: it’s hard work! If you can support each of these stakeholders in communicating effectively across disciplines, you can facilitate work that blends decades-long traditions from the social sciences with cutting-edge developments in computer science.

I’m also proud that this project was well received by the community. One of our papers received spotlight recognition at the socially responsible language modeling workshop at NeurIPS, one of the leading AI conferences. This work also inspired a similar interdisciplinary process, managed by AI Sweden, that adapted the work to fit Swedish notions and expressions of misogyny.

How do you navigate the challenges of the male-dominated tech industry and, by extension, the male-dominated AI industry?

It’s unfortunate that in such a cutting-edge industry, we’re still seeing problematic gender dynamics. It’s not just adversely affecting women; all of us are losing. I’ve been quite inspired by a concept called “feminist standpoint theory” that I learned about in Sasha Costanza-Chock’s book, “Design Justice.”

The theory claims that marginalized communities, whose knowledge and experiences don’t benefit from the same privileges as others, have an awareness of the world that can bring about fair and inclusive change. Of course, not all marginalized communities are the same, and neither are the experiences of individuals within those communities.

That said, diverse perspectives from those groups are critical in helping us navigate, challenge and dismantle all kinds of structural challenges and inequities. That’s why a failure to include women can keep the field of AI exclusionary for an even wider swath of the population, reinforcing power dynamics outside of the field as well.

In terms of how I’ve handled a male-dominated industry, I’ve found allies to be quite important. These allies are a product of strong and trusting relationships. For example, I’ve been very fortunate to have friends like Peter Kurzwelly, who’s shared his expertise in podcasting to support me in creating a female-led and -centered podcast called “The World We’re Building.” This podcast allows us to elevate the work of even more women and non-binary people in the field of AI.

What advice would you give to women seeking to enter the AI field?

Find an open door. It doesn’t have to be paid, it doesn’t have to be a career and it doesn’t even have to be aligned with your background or experience. If you can find an opening, you can use it to hone your voice in the space and build from there. If you’re volunteering, give it your all; it’ll allow you to stand out and, hopefully, get paid for your work as soon as possible.

Of course, there’s privilege in being able to volunteer, which I also want to acknowledge.

When I lost my job during the pandemic and unemployment was at an all-time high in Canada, very few companies were looking to hire AI talent, and those that were hiring weren’t looking for global affairs students with eight months’ experience in consulting. While applying for jobs, I began volunteering with an AI ethics organization.

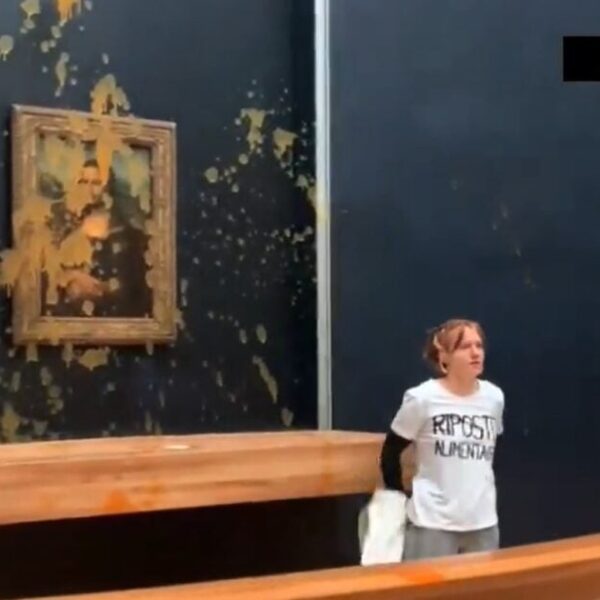

One of the projects I worked on while volunteering was about whether there should be copyright protection for art produced by AI. I reached out to a lawyer at a Canadian AI law firm to better understand the space. She connected me with someone at CIFAR, who connected me with Benjamin Prud’homme, the executive director of Mila’s AI for Humanity Team. It’s amazing to think that through a series of exchanges about AI art, I learned about a career opportunity that has since transformed my life.

What are some of the most pressing issues facing AI as it evolves?

I have three answers to this question that are somewhat interconnected. I think we need to figure out:

- How to reconcile the fact that AI is built to scale with the need to ensure that the tools we’re building are adapted to fit local knowledge, experience and needs.

- If we’re to build tools that are adapted to the local context, we’re going to need to incorporate anthropologists and sociologists into the AI design process. But there is a plethora of incentive structures and other obstacles preventing meaningful interdisciplinary collaboration. How can we overcome this?

- How can we affect the design process even more profoundly than simply incorporating multidisciplinary expertise? Specifically, how can we alter the incentives so that we’re designing tools for the people who need them most urgently, rather than for those whose data or business is most profitable?

What are some issues AI users should be aware of?

Labor exploitation is one of the issues that I don’t think gets enough coverage. Many AI models learn from labeled data using supervised learning methods. When a model relies on labeled data, people have to do the tagging (e.g., someone adds the label “cat” to an image of a cat). These people (annotators) are often the subjects of exploitative practices. For models that don’t require the data to be labeled during training (as is the case with some generative AI and other foundation models), datasets can still be built exploitatively, in that developers often don’t obtain consent from, or provide compensation or credit to, the data creators.

I would recommend checking out the work of Krystal Kauffman, who I was so glad to see featured in this TechCrunch series. She’s making headway in advocating for annotators’ labor rights, including a living wage, an end to “mass rejection” practices, and engagement practices that align with fundamental human rights (in response to developments like intrusive surveillance).

What is the best way to responsibly build AI?

People often look to ethical AI principles in order to claim that their technology is responsible. Unfortunately, that kind of ethical reflection often begins only after a number of decisions have already been made, including but not limited to:

- What are you constructing?

- How are you constructing it?

- How will it be deployed?

If you wait until after these decisions have been made, you’ll have missed countless opportunities to build responsible technology.

In my experience, the best way to build responsible AI is to be cognizant, from the earliest stages of your process, of how your problem is defined and whose interests it satisfies; how that framing supports or challenges pre-existing power dynamics; and which communities will be empowered or disempowered through the AI’s use.

If you want to create meaningful solutions, you must navigate these systems of power thoughtfully.

How can investors better push for responsible AI?

Ask about the team’s values. If the values are defined, at least in part, by the local community and there’s a degree of accountability to that community, it’s more likely that the team will incorporate responsible practices.