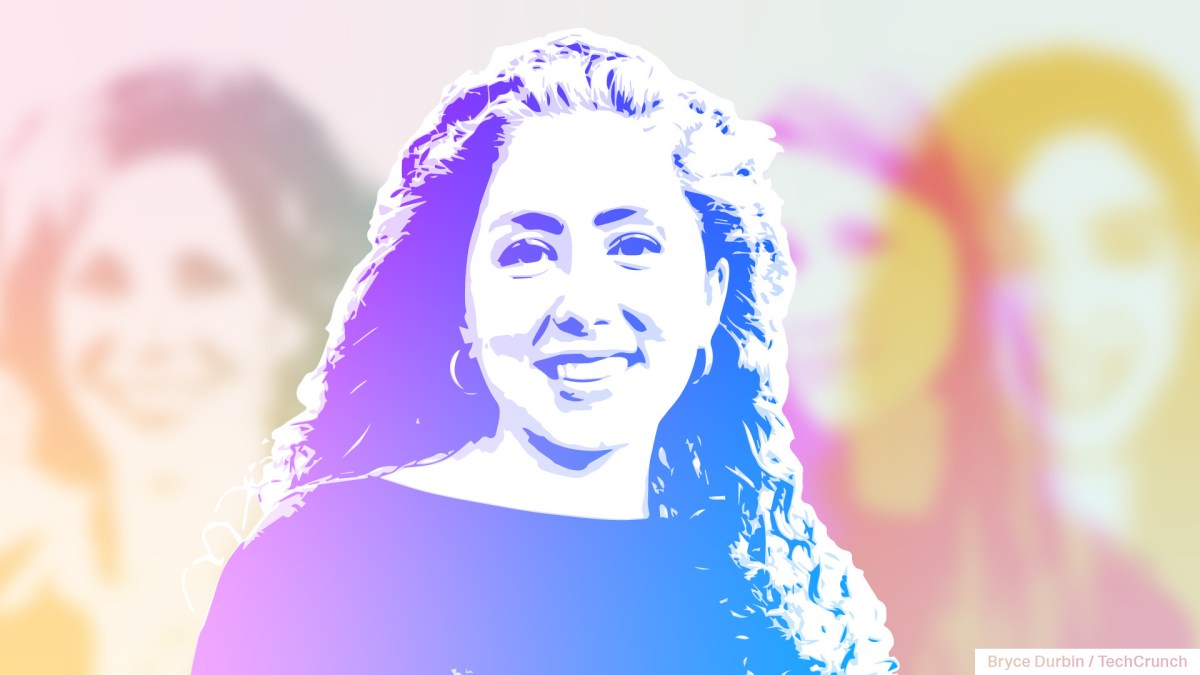

To give AI-focused women academics and others their well-deserved (and overdue) time in the spotlight, TechCrunch is launching a series of interviews focusing on remarkable women who’ve contributed to the AI revolution. We’ll publish several pieces throughout the year as the AI boom continues, highlighting key work that often goes unrecognized. Read more profiles here.

Claire Leibowicz is the head of the AI and media integrity program at the Partnership on AI (PAI), the industry group backed by Amazon, Meta, Google, Microsoft and others committed to the “responsible” deployment of AI tech. She also oversees PAI’s AI and media integrity steering committee.

In 2021, Leibowicz was a journalism fellow at Tablet Magazine, and in 2022, she was a fellow at The Rockefeller Foundation’s Bellagio Center focused on AI governance. Leibowicz, who holds a BA in psychology and computer science from Harvard and a master’s degree from Oxford, has advised companies, governments and nonprofit organizations on AI governance, generative media and digital information.

Q&A

Briefly, how did you get your start in AI? What attracted you to the field?

It may seem paradoxical, but I came to the AI field from an interest in human behavior. I grew up in New York, and I was always captivated by the many ways people there interact and how such a diverse society takes shape. I was curious about big questions that affect truth and justice, like how do we choose to trust others? What prompts intergroup conflict? Why do people believe certain things to be true and not others? I started out exploring these questions in my academic life through cognitive science research, and I quickly realized that technology was affecting the answers to these questions. I also found it intriguing how artificial intelligence could be a metaphor for human intelligence.

That brought me into computer science classrooms where faculty (I have to shout out Professor Barbara Grosz, who’s a trailblazer in natural language processing, and Professor Jim Waldo, who blended his philosophy and computer science background) underscored the importance of filling their classrooms with non-computer science and -engineering majors to focus on the social impact of technologies, including AI. And this was before “AI ethics” was a distinct and popular field. They made clear that, while technical understanding is beneficial, technology affects vast realms including geopolitics, economics, social engagement and more, thereby requiring people from many disciplinary backgrounds to weigh in on seemingly technological questions.

Whether you’re an educator thinking about how generative AI tools affect pedagogy, a museum curator experimenting with a predictive route for an exhibit or a doctor investigating new image detection methods for reading lab reports, AI can impact your field. This reality, that AI touches many domains, intrigued me: there was intellectual variety inherent to working in the AI field, and this brought with it a chance to impact many facets of society.

What work are you most proud of (in the AI field)?

I’m proud of the work in AI that brings disparate perspectives together in a surprising and action-oriented way, one that not only accommodates, but encourages, disagreement. I joined PAI as the organization’s second staff member six years ago, and sensed right away that the organization was trailblazing in its commitment to diverse perspectives. PAI saw such work as a vital prerequisite to AI governance that mitigates harm and leads to practical adoption and impact in the AI field. This has proven true, and I’ve been heartened to help shape PAI’s embrace of multidisciplinarity and watch the institution grow alongside the AI field.

Our work on synthetic media over the past six years started well before generative AI became part of the public consciousness, and exemplifies the possibilities of multistakeholder AI governance. In 2020, we worked with nine different organizations from civil society, industry and media to shape Facebook’s Deepfake Detection Challenge, a machine learning competition for building models to detect AI-generated media. These outside perspectives helped shape the fairness and goals of the winning models, showing how human rights experts and journalists can contribute to a seemingly technical question like deepfake detection. Last year, we published a normative set of guidance on responsible synthetic media, PAI’s Responsible Practices for Synthetic Media, that now has 18 supporters from extremely different backgrounds, ranging from OpenAI to TikTok to Code for Africa, Bumble, BBC and WITNESS. Being able to put pen to paper on actionable guidance that’s informed by technical and social realities is one thing, but it’s another to actually get institutional support. In this case, institutions committed to providing transparency reports about how they navigate the synthetic media field. AI projects that feature tangible guidance, and show how to implement that guidance across institutions, are some of the most meaningful to me.

How do you navigate the challenges of the male-dominated tech industry, and, by extension, the male-dominated AI industry?

I’ve had both wonderful male and female mentors throughout my career. Finding people who simultaneously support and challenge me is key to any growth I’ve experienced. I find that focusing on shared interests and discussing the questions that animate the field of AI can bring people with different backgrounds and perspectives together. Interestingly, PAI’s team is made up of more than half women, and many of the organizations working on AI and society or responsible AI questions have many women on staff. This is often in contrast to those working on engineering and AI research teams, and is a step in the right direction for representation in the AI ecosystem.

What advice would you give to women seeking to enter the AI field?

As I touched on in the previous question, some of the primarily male-dominated spaces within AI that I’ve encountered have also been those that are the most technical. While we should not prioritize technical acumen over other forms of literacy in the AI field, I’ve found that having technical training has been a boon to both my confidence and my effectiveness in such spaces. We need equal representation in technical roles and an openness to the expertise of folks who are experts in other fields, like civil rights and politics, that have more balanced representation. At the same time, equipping more women with technical literacy is key to balancing representation in the AI field.

I’ve also found it enormously meaningful to connect with women in the AI field who have navigated balancing family and professional life. Finding role models to talk to about big questions related to career and parenthood (and some of the unique challenges women still face at work) has made me feel better equipped to handle some of those challenges as they arise.

What are some of the most pressing issues facing AI as it evolves?

The questions of truth and trust online, and offline, become increasingly tricky as AI evolves. As content ranging from images to videos to text can be AI-generated or modified, is seeing still believing? How can we rely on evidence if documents can easily and realistically be doctored? Can we have human-only spaces online if it’s extremely easy to imitate a real person? How do we navigate the tradeoffs that AI presents between free expression and the possibility that AI systems can cause harm? More broadly, how do we ensure the information environment isn’t shaped only by a select few companies and those working for them, but incorporates the perspectives of stakeholders from around the world, including the public?

Alongside these specific questions, PAI has been involved in other facets of AI and society, including how we consider fairness and bias in an era of algorithmic decision making, how labor impacts and is impacted by AI, how to navigate responsible deployment of AI systems and even how to make AI systems more reflective of myriad perspectives. At a structural level, we must consider how AI governance can navigate vast tradeoffs by incorporating varied perspectives.

What are some issues AI users should be aware of?

First, AI users should know that if something sounds too good to be true, it probably is.

The generative AI boom over the past year has, of course, reflected enormous ingenuity and innovation, but it has also led to public messaging around AI that is often hyperbolic and inaccurate.

AI users should also understand that AI is not revolutionary, but exacerbating and augmenting existing problems and opportunities. This doesn’t mean they should take AI less seriously, but rather use this knowledge as a helpful foundation for navigating an increasingly AI-infused world. For example, if you are concerned about the fact that people could mis-contextualize a video before an election by changing the caption, you should be concerned about the speed and scale at which they can mislead using deepfake technology. If you are concerned about the use of surveillance in the workplace, you should also consider how AI will make such surveillance easier and more pervasive. Maintaining a healthy skepticism about the novelty of AI problems, while also being honest about what’s distinct about the current moment, is a helpful frame for users to bring to their encounters with AI.

What’s the best way to responsibly build AI?

Responsibly building AI requires us to broaden our notion of who plays a role in “building” AI. Of course, influencing technology companies and social media platforms is a key way to affect the impact of AI systems, and these institutions are vital to responsibly building technology. At the same time, we must recognize how diverse institutions from across civil society, industry, media, academia and the public must continue to be involved to build responsible AI that serves the public interest.

Take, for example, the responsible development and deployment of synthetic media.

While technology companies might be concerned about their responsibility when navigating how a synthetic video can influence users before an election, journalists may be worried about imposters creating synthetic videos that purport to come from their trusted news brand. Human rights defenders might consider responsibility related to how AI-generated media reduces the impact of videos as evidence of abuses. And artists might be excited by the opportunity to express themselves through generative media, while also worrying about how their creations might be leveraged without their consent to train AI models that produce new media. These diverse considerations show how vital it is to involve different stakeholders in initiatives and efforts to responsibly build AI, and how myriad institutions are affected by, and affecting, the way AI is integrated into society.

How can investors better push for responsible AI?

Years ago, I heard DJ Patil, the former chief data scientist in the White House, describe a revision to the pervasive “move fast and break things” mantra of the early social media era that has stuck with me. He suggested the field “move purposefully and fix things.”

I loved this because it didn’t imply stagnation or an abandonment of innovation, but intentionality and the possibility that one could innovate while embracing responsibility. Investors should help induce this mentality, allowing more time and space for their portfolio companies to bake in responsible AI practices without stifling progress. Oftentimes, institutions describe limited time and tight deadlines as the limiting factor for doing the “right” thing, and investors can be a major catalyst for changing this dynamic.

The more I’ve worked in AI, the more I’ve found myself grappling with deeply humanistic questions. And these questions require all of us to answer them.