Nvidia, ever eager to incentivize purchases of its latest GPUs, is releasing a tool that lets owners of GeForce RTX 30 Series and 40 Series cards run an AI-powered chatbot offline on a Windows PC.

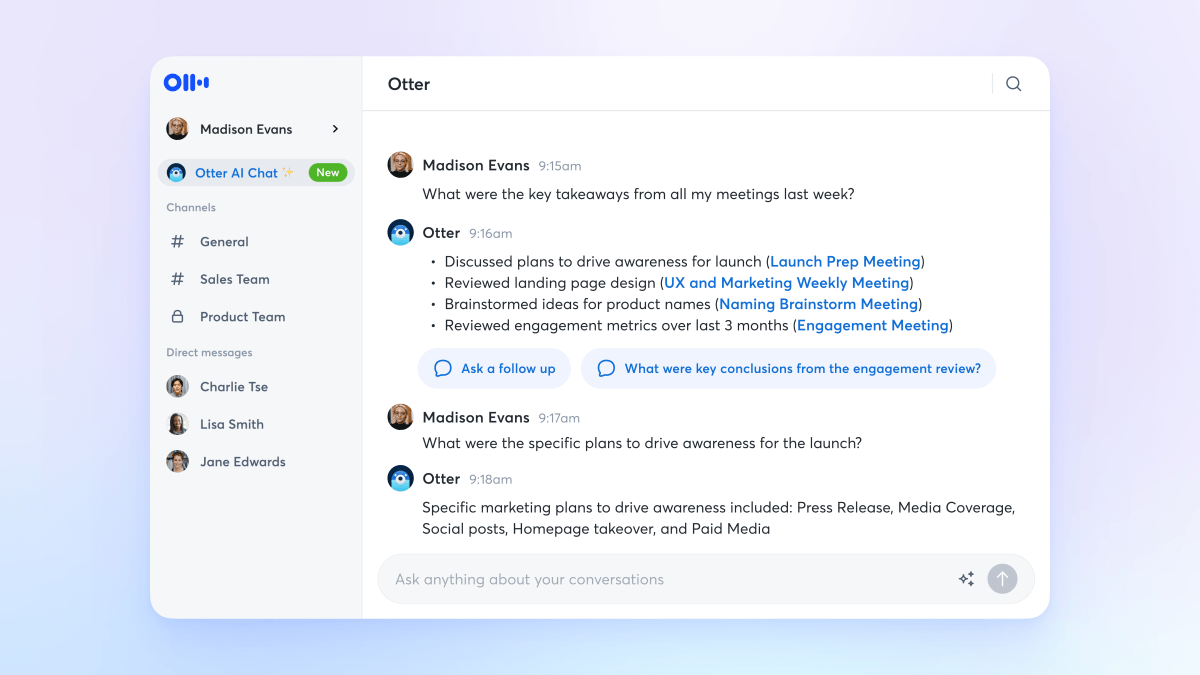

Called Chat with RTX, the tool allows users to customize a GenAI model along the lines of OpenAI’s ChatGPT by connecting it to documents, files and notes that it can then query.

“Rather than searching through notes or saved content, users can simply type queries,” Nvidia writes in a blog post. “For example, one could ask, ‘What was the restaurant my partner recommended while in Las Vegas?’ and Chat with RTX will scan local files the user points it to and provide the answer with context.”

Chat with RTX defaults to AI startup Mistral’s open source model but supports other text-based models, including Meta’s Llama 2. Nvidia warns that downloading all the necessary files will eat up a fair amount of storage: 50GB to 100GB, depending on the model(s) selected.

Currently, Chat with RTX works with text, PDF, .doc, .docx and .xml formats. Pointing the app at a folder containing any supported files will load the files into the model’s fine-tuning data set. In addition, Chat with RTX can take the URL of a YouTube playlist to load transcriptions of the videos in the playlist, enabling whichever model is selected to query their contents.
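To make the folder-loading step concrete, here is a minimal Python sketch of how an app might pick out the supported files in a folder. It is an illustration only, not Nvidia's code: the function name `find_supported_files` is hypothetical, the `.txt` extension is an assumed stand-in for the "text" format, and searching subfolders is likewise an assumption.

```python
from pathlib import Path

# Formats named in the article. Assumption: "text" means the .txt extension.
SUPPORTED_EXTENSIONS = {".txt", ".pdf", ".doc", ".docx", ".xml"}


def find_supported_files(folder):
    """Return sorted paths under `folder` whose extension is supported.

    Hypothetical helper for illustration. It searches subfolders too,
    which may or may not match how Chat with RTX behaves.
    """
    root = Path(folder)
    return sorted(
        path
        for path in root.rglob("*")
        # Compare extensions case-insensitively so "Report.PDF" still matches.
        if path.is_file() and path.suffix.lower() in SUPPORTED_EXTENSIONS
    )
```

Calling `find_supported_files("my_notes")` on a hypothetical `my_notes` folder would return only the documents an app like this could ingest, skipping images and other unsupported files.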

Now, there are certain limitations to keep in mind, which Nvidia to its credit outlines in a how-to guide.

Image Credits: Nvidia

Chat with RTX can’t remember context, meaning that the app won’t take into account any previous questions when answering follow-up questions. For example, if you ask “What’s a common bird in North America?” and follow that up with “What are its colors?,” Chat with RTX won’t know that you’re talking about birds.

Nvidia also acknowledges that the relevance of the app’s responses can be affected by a range of factors, some easier to control for than others, including the question phrasing, the performance of the selected model and the size of the fine-tuning data set. Asking for facts covered in a couple of documents is likely to yield better results than asking for a summary of a document or set of documents. And response quality will generally improve with larger data sets, as will pointing Chat with RTX at more content about a specific subject, Nvidia says.

So Chat with RTX is more a toy than anything to be used in production. Still, there’s something to be said for apps that make it easier to run AI models locally, which is something of a growing trend.

In a recent report, the World Economic Forum predicted a “dramatic” growth in affordable devices that can run GenAI models offline, including PCs, smartphones, internet of things devices and networking equipment. The reasons, the WEF said, are the clear benefits: not only are offline models inherently more private (the data they process never leaves the device they run on), but they’re lower latency and more cost effective than cloud-hosted models.

Of course, democratizing tools to run and train models opens the door to malicious actors; a cursory Google Search yields many listings for models fine-tuned on toxic content from unscrupulous corners of the web. But proponents of apps like Chat with RTX argue that the benefits outweigh the harms. We’ll have to wait and see.