
Introduction

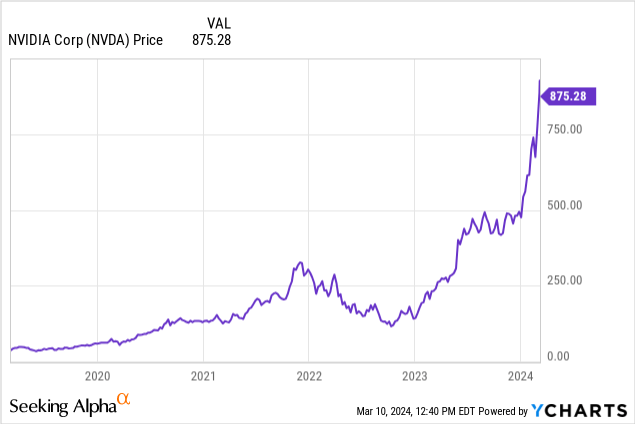

When a stock chart looks like that of NVIDIA Corporation (NASDAQ:NVDA), investors rightfully become wary of buying more shares. Obviously, the best time to buy recently was mid-2022, but that was before the ChatGPT launch and a time of intense fear of recession. It was anything but obvious at the time.

Many analysts, myself included, didn’t even believe in the first leg of the rally. In one of my worst calls since joining Seeking Alpha, I told readers to sell the irrational exuberance in May 2023. Nvidia stock is up 130% since that article.

I made the classic mistake of using backward-looking valuation metrics to analyze a company with mouth-watering growth prospects. By November 2023, I had flipped my opinion. I covered NVIDIA Corporation (NVDA) again, this time with a Buy recommendation, and the stock is up 80% since then.

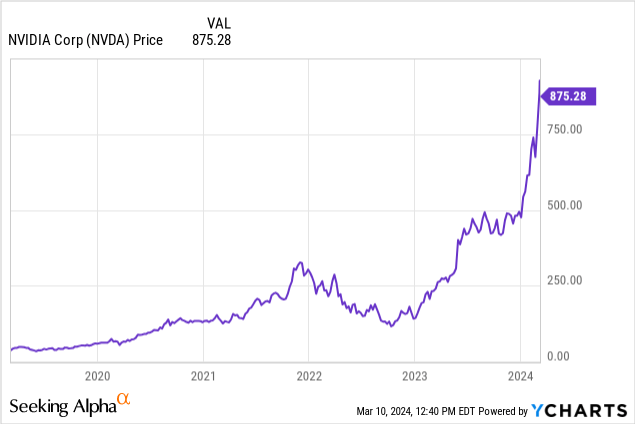

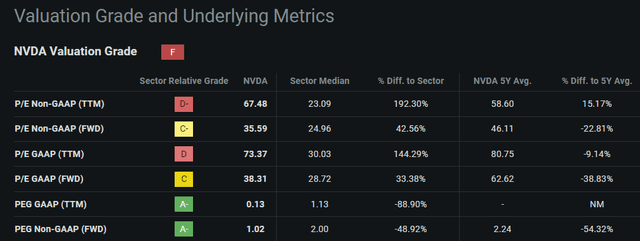

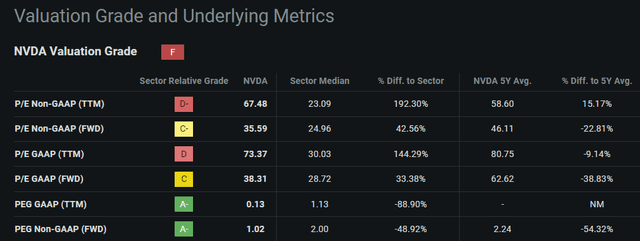

Throughout all of 2023, and indeed even now, Nvidia’s growth estimates have yielded reasonable forward-looking valuation metrics:

Seeking Alpha NVDA Quant Valuation

Paying 35x earnings for a company that grew revenue 126% YoY in its most recent report is reasonable. Nvidia’s Q1 FY 2025 guidance of $24b implies 8.5% QoQ growth, and analysts expect full-year revenue to reach $110.2b in the January 2025 report, implying 81% growth from the most recent $60.9b mark.
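The growth figures above are simple ratios. A minimal Python sketch, using only the numbers quoted in this article (the helper name `pct_growth` is hypothetical), checks the full-year math and backs out the prior-quarter revenue that the 8.5% QoQ guidance implies:

```python
def pct_growth(new: float, old: float) -> float:
    """Percentage change from old to new."""
    return (new / old - 1.0) * 100.0

# Figures quoted in the article, in billions of USD.
fy2024_revenue = 60.9
fy2025_consensus = 110.2
q1_fy2025_guidance = 24.0
qoq_growth = 0.085

full_year_growth = pct_growth(fy2025_consensus, fy2024_revenue)
# Prior-quarter revenue implied by "$24b is 8.5% above last quarter".
implied_prior_quarter = q1_fy2025_guidance / (1.0 + qoq_growth)

print(f"FY2025 consensus growth: {full_year_growth:.0f}%")       # FY2025 consensus growth: 81%
print(f"Implied prior-quarter revenue: ${implied_prior_quarter:.1f}b")  # Implied prior-quarter revenue: $22.1b
```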

By 2027, 20 analysts expect Nvidia’s revenue to reach $150b, which would represent a 35% CAGR from the most recent report.

Seeking Alpha NVDA Earnings Tab
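A compound annual growth rate (CAGR) is the constant yearly rate that turns a starting value into an ending value over n years: (end / start)^(1/n) − 1. A minimal Python sketch, assuming the $150b estimate is three fiscal years after the $60.9b base (the article does not state the year count explicitly):

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0

# Assumption: $60.9b base to the $150b estimate spans 3 years.
rate = cagr(60.9, 150.0, 3)
print(f"Implied CAGR: {rate:.0%}")  # Implied CAGR: 35%
```

Under that three-year assumption, the sketch reproduces the 35% figure; a longer window would imply a lower annual rate.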

Nvidia’s growth story is compelling, but the investment case remains difficult. Parabolic price action is scary to buy into, but forward-looking valuations make the current price look reasonable.

In this article, I’d like to weigh the factors underlying Nvidia’s growth outlook and present bear and bull cases for investing in Nvidia at current prices.

Since I’ll be taking both sides of the argument here, I’m rating Nvidia a Hold. For full transparency, I’ve been cost-averaging into Nvidia since mid-2023 and plan neither to sell nor to stop accumulating shares. I believe Nvidia is one of the world’s best businesses, and the market is largely pricing that sentiment in.

With that, let’s dive into the bull and bear cases for Nvidia stock.

The Bull Case

The bull case is quite simple: growth. Using the information I detailed above, some would even classify Nvidia as a growth at a reasonable price (GARP) play.

Nvidia offers industry-leading AI solutions. Artificial intelligence has taken the world by storm since the late 2022 launch of ChatGPT, and major cloud service providers and enterprises alike are scrambling to obtain enough GPUs or GPU capacity to commercialize AI at scale. This scramble has benefited no one more than Nvidia, which has a deep competitive advantage in AI solutions.

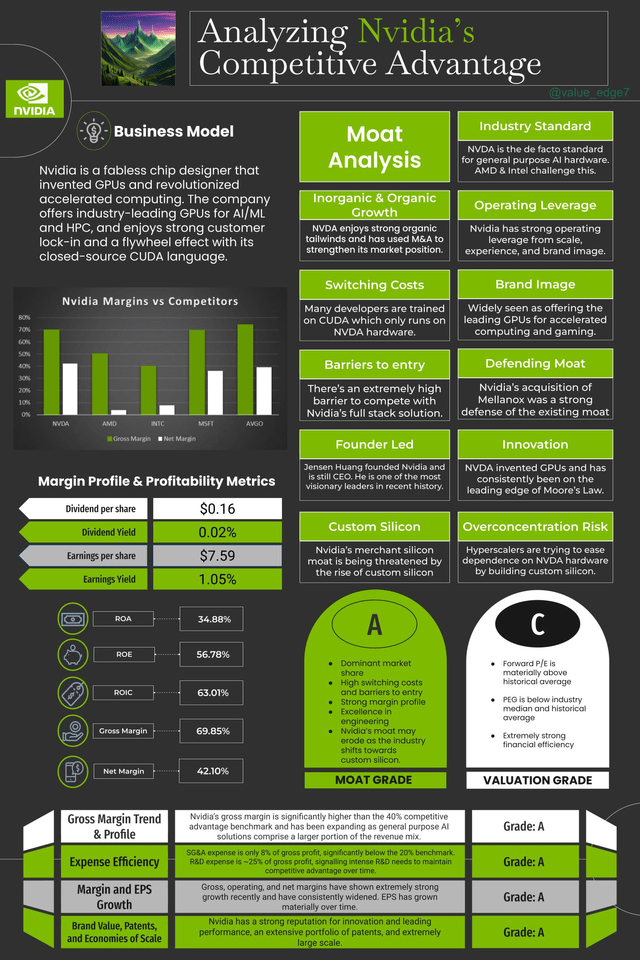

Bull Case #1: Nvidia’s Full Stack Moat

Nvidia’s deep competitive advantage in AI applications has made its full-stack solution the default choice. The financial results show this: no other company is benefiting from AI spending as much as Nvidia. Nvidia’s GPU programming software, CUDA, which can only run on Nvidia hardware, has the most robust developer ecosystem and boasts the most extensive set of AI libraries in the industry. The only serious competitor here is Advanced Micro Devices, Inc. (AMD), with its MI300X accelerator and open-source ROCm programming software.

GPU programming allows engineers to optimize chip performance for their specific use cases. The workhorse of Nvidia GPUs is known as an SM, or Streaming Multiprocessor. CUDA does several important things: 1) it optimizes the memory read path, which decreases memory latency; 2) it oversubscribes SMs to hide memory latency; and 3) it divides data structures into parallel processing blocks and assigns those blocks to SMs, so the GPU can perform tasks in parallel and work around the memory bandwidth bottleneck. I discuss this in more detail in a recent post on X.
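The third point, splitting data into blocks that are spread across SMs, is easiest to see in miniature. The Python sketch below is only a conceptual model, not CUDA code and not how Nvidia’s hardware scheduler actually works: it computes how many thread blocks a launch needs for a given array (the usual ceiling-division pattern), maps a thread to its element the way CUDA’s `blockIdx.x * blockDim.x + threadIdx.x` idiom does, and deals blocks out round-robin to a hypothetical number of SMs. The block size of 256 and the 4 SMs are illustrative assumptions; each SM receiving several blocks is the oversubscription that gives it other work to switch to while some threads wait on memory.

```python
from collections import defaultdict


def num_blocks(n_elements: int, threads_per_block: int) -> int:
    """Ceiling division: enough blocks so every element gets a thread."""
    return (n_elements + threads_per_block - 1) // threads_per_block


def element_index(block_id: int, thread_id: int, threads_per_block: int) -> int:
    """Global element index, mirroring blockIdx.x * blockDim.x + threadIdx.x."""
    return block_id * threads_per_block + thread_id


def assign_blocks(n_blocks: int, n_sms: int) -> dict[int, list[int]]:
    """Toy round-robin placement of block IDs onto SMs (illustrative only)."""
    placement: dict[int, list[int]] = defaultdict(list)
    for block_id in range(n_blocks):
        placement[block_id % n_sms].append(block_id)
    return dict(placement)


n, tpb, sms = 10_000, 256, 4  # hypothetical problem size, block size, SM count
blocks = num_blocks(n, tpb)
placement = assign_blocks(blocks, sms)
for sm, ids in placement.items():
    print(f"SM {sm}: {len(ids)} blocks queued, e.g. {ids[:3]}")
```

With these numbers, 10,000 elements need 40 blocks, and each of the 4 hypothetical SMs is handed 10 of them.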

Nvidia began developing CUDA in 2007, 10 years before AMD launched ROCm, and as a result has by far the best ecosystem. The Mellanox acquisition gave Nvidia industry-leading InfiniBand networking capabilities, rounding out the full-stack hardware-software-networking AI accelerator platform. Nvidia’s stack is unmatched, which explains why the H100 has become the industry-standard chip for AI applications.

I put together this graphic as a resource:

This isn’t the entire bull case, though. Nvidia’s true bull case is one of supply-demand rebalancing.

Bull Case #2: Supply-Demand Equilibrium

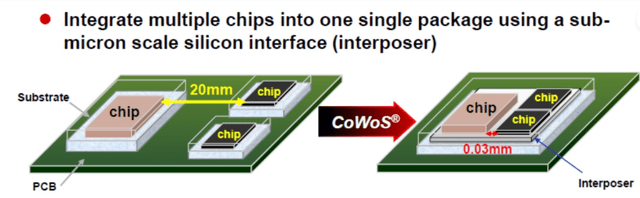

Throughout all of 2023, Nvidia’s growth was hamstrung by a supply crunch. The source of the supply crunch was the advanced packaging method used for H100s.

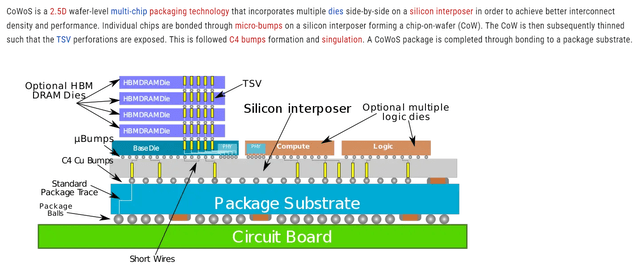

H100s use the “CoWoS” packaging design from Taiwan Semiconductor Manufacturing Company Limited (TSM), aka TSMC. That stands for “chip-on-wafer-on-substrate.” It is a packaging method designed to boost chip performance without necessarily increasing transistor density.

As the name suggests, this involves mounting multiple silicon chips atop a silicon wafer. This first layer allows for easier interconnection of individual chips through the silicon wafer, improving performance at the same die size.

Then, the chip-on-wafer assembly, in which the silicon wafer serves as the interposer, is mounted on the substrate material.

High bandwidth memory, HBM, was another reason CoWoS packaging was necessary. Building HBM involves stacking multiple DRAM (dynamic random access memory) chips atop one another, drilling holes in them called TSVs (through-silicon vias), and connecting the chips by running interconnects through the TSVs.

So, logic and memory chips are placed together on a wafer. Numerous interconnects can be built into this wafer to allow for chip-to-chip communication. Then, this CoW structure is placed atop a packaging substrate, which is connected to the PCB (printed circuit board) via solder balls.

CoWoS packaging allows Nvidia to pack much more performance into the same die size without shrinking transistors or increasing transistor density.

The tradeoff here is process complexity, and therefore manufacturing capacity. TSMC was extremely underprepared for the demand shock caused by ChatGPT, and Nvidia simply couldn’t buy enough chips to meet demand. This supply imbalance is still being felt: CFO Colette Kress said the company expects B100, the next-generation AI accelerator, to be supply constrained as well. There is simply too much demand.

The supply crunch did two things: 1) it gave Nvidia extremely strong pricing power with H100s; and 2) it hamstrung Nvidia’s growth in 2023. Yes, without supply constraints Nvidia would have sold many more GPUs in 2023.

TSMC has ramped up its CoWoS production capacity significantly since early 2023, and Nvidia will enjoy a more balanced supply/demand picture going forward. This could bring prices down, but given the extraordinary demand, increased unit sales will more than make up for it. Indeed, supply meeting demand could lead to Nvidia beating the already ludicrous growth expectations.
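The argument that volume can outrun price cuts comes down to revenue = price × units. A minimal Python sketch with purely hypothetical numbers (none of these are Nvidia figures): if the average selling price falls 15% while units shipped rise 50%, revenue still grows 27.5%, and units only need to rise about 17.6% to offset the price cut.

```python
def revenue_change(price_change: float, unit_change: float) -> float:
    """Fractional revenue change when price and units change by the given fractions."""
    return (1.0 + price_change) * (1.0 + unit_change) - 1.0


def breakeven_unit_growth(price_change: float) -> float:
    """Unit growth needed to keep revenue flat after a given price change."""
    return 1.0 / (1.0 + price_change) - 1.0


# Hypothetical scenario: average selling price down 15%, units up 50%.
print(f"{revenue_change(-0.15, 0.50):.1%}")   # 27.5%
print(f"{breakeven_unit_growth(-0.15):.1%}")  # 17.6%
```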

The next question, then, is how sustainable this demand is. The semiconductor ecosystem is very cyclical, and AI demand will likely follow this pattern. ASML Holding N.V. (ASML) CEO Peter Wennink said in the recent earnings call that the industry is emerging from a long trough that began during the COVID-19 supply shock. He believes 2025 will be a year of strong growth for ASML, and this growth ripples throughout the entire ecosystem. More ASML machines mean more manufacturing capacity for Nvidia chips, which means more unit sales. Thus, Nvidia’s growth estimates do look reasonable for fiscal years 2025 and 2026.

Finally, AI demand may be shielded from some of the most severe cyclical troughs thanks to the scaling law. Scaling laws state that AI model performance increases as compute capacity increases, without any change to the underlying engineering.

In other words, even without improvements in model engineering, if companies simply supply their models with more compute, performance will improve.
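Empirical scaling-law studies typically model this as a power law: loss L falls with compute C roughly as L(C) = (C0 / C)^α for a small positive exponent α. A minimal Python sketch with illustrative constants (C0 = 1 and α = 0.05 are assumptions chosen for readability, not fitted values) shows both sides of the argument: more compute always lowers loss, but each doubling buys a smaller absolute improvement, which is why ever more compute is needed to keep reaping gains.

```python
def loss(compute: float, c0: float = 1.0, alpha: float = 0.05) -> float:
    """Illustrative power-law scaling: loss falls as compute grows.

    c0 and alpha are placeholder constants, not fitted values.
    """
    if compute <= 0:
        raise ValueError("compute must be positive")
    return (c0 / compute) ** alpha


# Each doubling multiplies loss by the same factor, 2 ** -alpha (~0.966 here),
# so the absolute gain per doubling shrinks as loss gets smaller.
budgets = [2 ** k for k in range(5)]  # hypothetical budgets: 1x, 2x, 4x, 8x, 16x
for c in budgets:
    print(f"{c:>3}x compute -> loss {loss(c):.4f}")
```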

This does two things to demand: 1) it gives all hyperscalers an incentive to buy as many GPUs as possible or risk being left behind, and 2) as more compute-intensive models get released (GPT-5, Sora), even more compute capacity will be required to reap further scaling benefits.

Indeed, scaling laws present a very compelling virtuous cycle for AI hardware demand.

As businesses try to deploy AI at scale, like Adobe Inc. (ADBE) with Firefly and Microsoft Corporation (MSFT) with Copilot, inference demand will keep growing.

Looking into the uncertain future, demand for AI hardware really does seem limitless right now. As long as this demand remains robust, Nvidia will be a good investment. Growth and innovation will continue, vendor lock-in will become more severe, and Nvidia’s flywheel effect will grow stronger.

Robust demand underpins the bullish assumption that growth estimates are achievable, and therefore that the current valuation is reasonable.

What if demand falls off a cliff, though? Worse yet, what if we have another supply shock?

Herein lies the bear case for Nvidia: supply and demand uncertainty.

The Bear Case

After the initial launch of ChatGPT in late 2022, several important things happened. First, almost everyone who used it, myself included, was awe-struck. You enter a prompt in human language, and a computer talks back to you with detailed, mostly accurate responses. It seemed like magic at first. Usage exploded, and the compute required to run it skyrocketed.

Bear Case #1: Inverse Scaling Law Eroding Consumer Demand

Over time, many frequent users became disgruntled. ChatGPT got lazy: you would feed it a long document, and it would only scan the first few pages. Ask it to check a code block, and it would correct a few lines and then tell you to do the rest. If it had trouble analyzing data, it would just return an error.

Many people began speculating that OpenAI was intentionally throttling model performance, and eventually it emerged that this may have been the case. Why? OpenAI didn’t have enough compute to run inference at scale. It was suffering from the flip side of the scaling law: not enough compute reduced model performance.

OpenAI limited usage and made GPT-4 less effective. This nicely illustrates the truth of the scaling law, but it also highlights the key risk in commercializing AI at scale: can our compute capacity keep up with inference demand?

On the surface, this is a demand tailwind for Nvidia. CSPs and AI companies will likely always need more compute. Over time, though, this could shift consumer sentiment toward broadly viewing AI as not useful enough. If Microsoft can’t sell enough Copilot subscriptions, the economics of stockpiling GPUs won’t make as much sense. Microsoft could cut hardware purchases, and Nvidia could face demand headwinds or inventory write-downs.

While I do believe this is a possible scenario, I don’t think it is likely to be a major headwind. Even if consumer demand falters, enterprise demand will be robust. Enterprises have a strong incentive to use AI to cut costs, as seen in Klarna’s recent announcement that its AI chatbot handled two-thirds of customer chats in its first month. Therefore, I don’t believe the bear case of weak consumer demand will hold Nvidia back in the long run.

Bear Case #2: Wariness of Vendor Lock-In

I recently wrote an earnings review on Broadcom. In that report, I touched on Broadcom’s custom silicon business and why it is such a tailwind. In this context, Nvidia is a merchant silicon provider. Merchant silicon is general-purpose hardware. Nvidia GPUs are sometimes called “GPGPUs,” or “General Purpose GPUs.” They’re built to be the do-everything AI accelerator; H100s are one-size-fits-all. This is why CUDA is so important: it allows for optimization of merchant silicon.

Over time, though, big tech companies still needed more compute power. This led to a shift away from merchant silicon toward custom silicon. Since 2009, Broadcom’s custom silicon division has grown operating income by more than 177x. Broadcom’s major customers are Alphabet Inc. (GOOG, GOOGL) with its TPUs and Meta Platforms, Inc. (META) with its MTIA chip. Increasingly complex software applications require offloading tasks from merchant silicon to custom silicon. Custom silicon is purpose-built and application-optimized: it is built to do one thing very well, and much more efficiently than a GPGPU could.
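To put a multi-year growth multiple like 177x in annual terms, take the n-th root. A minimal Python sketch, assuming the multiple spans roughly 14 to 15 years (the article gives 2009 as the start but not the end year, so both windows are shown as a sensitivity check; the helper name is hypothetical):

```python
def implied_annual_rate(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


# The 177x multiple comes from the article; the window length is an assumption.
for years in (14, 15):
    rate = implied_annual_rate(177, years)
    print(f"177x over {years} years ~ {rate:.0%} per year")
```

Either window implies sustained annual growth in the low-to-mid 40% range.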

This threat is real, and Nvidia itself has acknowledged it: Nvidia is venturing into the custom silicon business. The key selling points of a custom silicon provider are its IP portfolio, design expertise, and relationships with foundries. Nvidia fits the bill exceedingly well and is likely to become a major player in custom silicon.

However, there is a growing narrative that CSPs and other data center operators are becoming wary of vendor lock-in with Nvidia. If Nvidia controls the full stack of AI solutions, big CSPs will lose negotiating power and could become price takers over time.

This has led many CSPs to opt for the custom silicon route: Google and Meta with Broadcom; Microsoft Corporation (MSFT), which is building the custom Cobalt and Maia chips; Apple Inc. (AAPL), whose “M” and “A” series chips have been custom for years; and Amazon.com, Inc. (AMZN), which launched custom chips dubbed Trainium and Inferentia.

It’s clear that demand for custom silicon solutions is growing, partly because of inherent GPGPU limitations but also out of fear of Nvidia gaining too much negotiating power. Nvidia’s custom silicon business could end up being a bust for this reason.

Regardless, the data center of the future will have a mix of merchant and custom silicon. Nvidia’s demand will remain strong because H100s are basically indispensable for commercializing AI at scale.

Nvidia’s long-run demand roadmap is protected. It has a strong competitive advantage, and custom silicon won’t outright replace merchant silicon. Therefore, I don’t believe these bear cases are very compelling yet, but they’re worth monitoring. The final bear case, a supply shock, is the most compelling.

Bear Case #3: Supply Shock

We’ve talked in detail about Nvidia’s robust demand, why it exists, and why it’s sustainable. We’ve also talked about Nvidia’s competitive advantage and how a supply/demand imbalance kept Nvidia’s sales contained throughout 2023.

The black swan that isn’t priced in is a conflict between China and Taiwan. While all analysts acknowledge the threat, it’s impossible to predict what will happen.

One reason China is so intent on taking Taiwan is its chip manufacturing capability. China has decent domestic chip manufacturing capabilities but has been cut out of the EUV supply chain by the U.S. government. ASML came under pressure from both the Trump and Biden administrations to restrict China’s access to the EUV lithography supply chain. ASML hasn’t shipped any EUV machines to China, cutting China off from the sole supplier of the only machine capable of producing leading-edge chips. EUV lithography is indispensable for leading-edge manufacturing with economical yields.

For a brief period, China still appeared to be advancing well. The Mate 60 Pro, Huawei’s competitor to the iPhone 14, has a 7 nm chip. I discuss this in detail in an article on Apple. While the processor in the Mate 60 Pro was still not equivalent to the 5 nm A16 Bionic chip in the iPhone 14 Pro, it shocked many pundits. It was a failure of U.S. sanctions meant to keep China from producing at such an advanced process node.

On March 8th, 2024, Fortune reported that SMIC, the Chinese fab that produced the chip, used American WFE (wafer fabrication equipment) from Applied Materials, Inc. (AMAT) and KLA Corporation (KLAC), American technology that has likely since been sanctioned. It’s unlikely that China will be able to procure future generations of WFE machines from AMAT and KLAC. What was once a triumph of Chinese manufacturing independence has now become an embarrassing example of dependence on sanctioned American technology.

I doubt Beijing is happy about this Fortune article. In fact, I believe it increases the risk of geopolitical conflict. If Taiwan faces the worst-case scenario, a Chinese invasion, chip exports will almost certainly drop precipitously.

Nvidia is entirely dependent on TSMC for its leading-edge GPUs, and thus this is the key risk Nvidia faces. Any disruption to TSMC’s production will affect Nvidia in a meaningful way.

This risk is mostly short-term. Although the world depends on Taiwan today, many governments are subsidizing domestic industry in pursuit of on-shored supply chains. For example, Intel Corporation (INTC) has announced several manufacturing expansions and placed the first pre-order for ASML’s next-generation lithography machine, dubbed High-NA EUV. Long-term industry trends favor Nvidia’s continued growth despite significant short-term risks around Taiwan.

Make no mistake, though: this risk should be taken seriously. Even increasingly combative rhetoric between the U.S. and China could turn sentiment on Nvidia. If sentiment wavers, the stock could experience a severe multiple contraction.
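A multiple contraction means the share price falls because investors pay less per dollar of earnings, even if earnings hold steady: price = P/E × EPS. A minimal Python sketch starting from the 35x earnings multiple cited earlier; the 25x endpoint and the EPS value are hypothetical:

```python
def price_from_multiple(pe: float, eps: float) -> float:
    """Share price implied by a price-to-earnings multiple and earnings per share."""
    return pe * eps


def contraction_drawdown(pe_before: float, pe_after: float) -> float:
    """Fractional price change from a multiple change alone (EPS held constant)."""
    return pe_after / pe_before - 1.0


eps = 20.0  # hypothetical EPS, held constant
before = price_from_multiple(35, eps)
after = price_from_multiple(25, eps)  # hypothetical contracted multiple
print(f"${before:.0f} -> ${after:.0f} ({contraction_drawdown(35, 25):.1%})")  # $700 -> $500 (-28.6%)
```

The drawdown depends only on the ratio of the two multiples, so the hypothetical EPS value does not change the percentage.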

Such a multiple contraction would present a very compelling buying opportunity, though. Nvidia’s dominance depends on Taiwan today, but this dependence is easing. All fabless chip designers are aware of this risk and will continue working to diversify their supply chains. Intel could be the biggest beneficiary of this and is undergoing a sweeping transformation in its own right.

Conclusion

Nvidia’s story is a triumph of American capitalism. The company embarked on a risky and uncertain journey to build the best accelerated computing platform, long before there was any meaningful demand for accelerated computing. Clearly, the company has been abundantly rewarded for this risk, and the technology it has built is becoming the most critical component of the AI revolution sweeping the globe. Nvidia is an excellent business that I intend to own indefinitely, until the valuation becomes far more overstretched or the growth thesis falls apart.

This was my longest article to date on Seeking Alpha. If you made it this far, I sincerely appreciate your attention. If you agree or disagree with anything, or feel that I’ve missed some important points, let’s discuss it in the comments. If I earned a follow, I really appreciate the support. Thanks for reading!